In the world of biosignals, the most complete insights come from combining multiple modalities. While eye tracking provides a precise map of visual attention and external focus, EEG adds a layer of depth by capturing simultaneous neural activity.

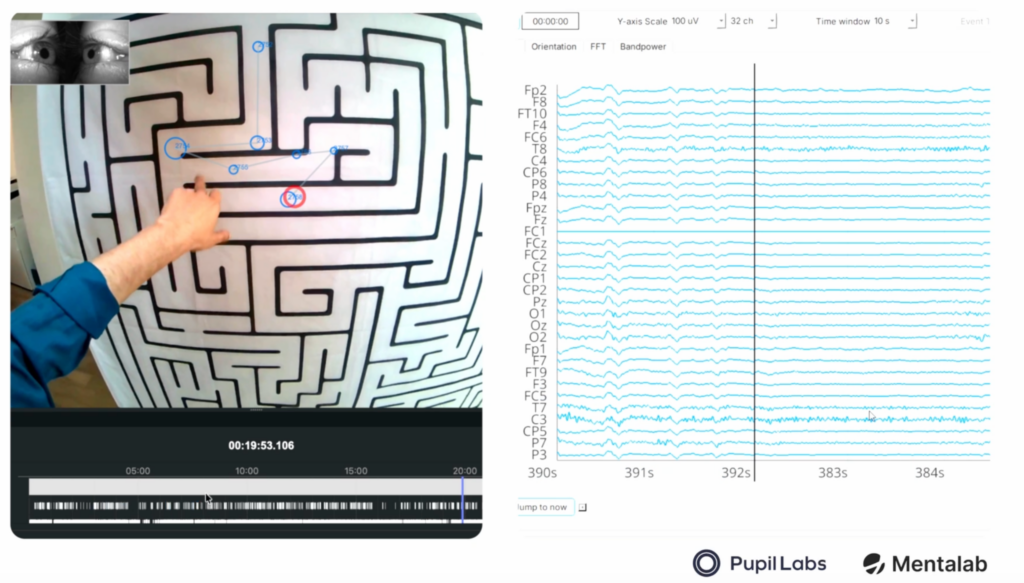

By integrating the Mentalab Explore Pro’s research-grade EEG with Pupil Labs Neon mobile eye tracking, we have achieved precise, millisecond-level synchronization between EEG data and saccadic eye movements – even within the dynamic environment of a mobile maze-solving task.

Why Integrate EEG and Eye Tracking?

The fundamental premise of this multimodal approach is that the two modalities capture distinct, complementary aspects of a participant’s state. Eye tracking (ET) maps visual attention: it records gaze coordinates and pupil dilation to identify which stimuli drive a response. EEG captures neural dynamics through electrophysiological changes on the scalp, offering a high-temporal-resolution window into cognitive load and engagement that gaze data alone cannot provide. Multimodal classifiers that combine these data streams have been reported to improve recognition performance by an average of 8.12% over unimodal classifiers [4]. Combining the streams also helps resolve ambiguities that either signal leaves open on its own and correct for temporal noise, giving a more robust picture of the subject’s internal state.

Case Study: Decoding the Labyrinth

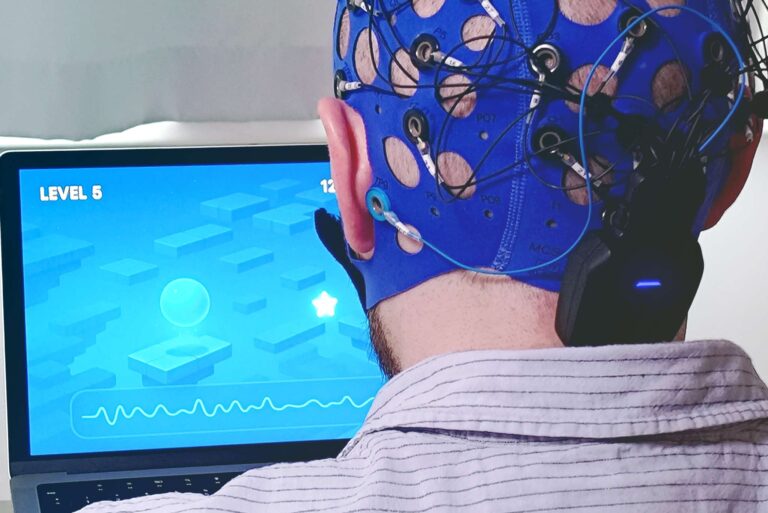

To see this synergy in action, we acquired co-registered EEG and eye-tracking data from a participant visually navigating a maze while equipped with both Mentalab Explore Pro and Pupil Labs Neon. Using Pupil Labs Neon, we identified individual fixations and saccades as the subject scanned the environment for the correct path. Simultaneously, the Mentalab Explore Pro monitored brain activity; as the labyrinth grew more complex, we observed neural shifts indicating increased mental effort. Mentalab Hypersync aligned the two data streams with millisecond accuracy, so that each neural event could be mapped to the spatial coordinate the subject was viewing at that moment.
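Once both streams share a clock, mapping a neural event to a gaze coordinate comes down to a nearest-timestamp lookup. The following is a minimal sketch of that idea using synthetic data. It is not how Hypersync works internally, and the function names, the 10 ms tolerance, and the sample values are all illustrative assumptions.

```python
from bisect import bisect_left


def nearest_index(sorted_times, t):
    """Return the index of the value in sorted_times closest to t."""
    if not sorted_times:
        raise ValueError("sorted_times must not be empty")
    i = bisect_left(sorted_times, t)
    if i == 0:
        return 0
    if i == len(sorted_times):
        return len(sorted_times) - 1
    # Pick whichever neighbour is closer in time.
    return i if sorted_times[i] - t < t - sorted_times[i - 1] else i - 1


def gaze_at_events(event_times, gaze_times, gaze_xy, max_gap_s=0.01):
    """Pair each event time with the nearest gaze sample.

    Returns a list of (event_time, (x, y) or None). None marks events
    that have no gaze sample within max_gap_s seconds.
    """
    paired = []
    for t in event_times:
        j = nearest_index(gaze_times, t)
        if abs(gaze_times[j] - t) <= max_gap_s:
            paired.append((t, gaze_xy[j]))
        else:
            paired.append((t, None))
    return paired


# Synthetic example: gaze sampled every 5 ms, two hypothetical EEG event times.
gaze_times = [0.000, 0.005, 0.010, 0.015, 0.020]
gaze_xy = [(100, 200), (102, 201), (150, 240), (151, 242), (151, 243)]
events = [0.0112, 0.5]
print(gaze_at_events(events, gaze_times, gaze_xy))
```

The tolerance guards against pairing an event with a gaze sample recorded long before or after it, for example during a dropout in one of the streams.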

Watch the Video Here: Mentalab X Pupil Labs

Technical Setup: The Simple Workflow

The center of our collaboration is a streamlined, high-performance integration. Here is the step-by-step workflow to achieve synchronized results using the Lab Streaming Layer (LSL) protocol:

- Connect the eye tracker to the phone, and make sure pushing to LSL is enabled.

- Connect the Explore Pro to a computer or study phone.

- Push to LSL from the Explore Desktop or Explore Mobile app.

- Record data in LabRecorder (this pulls both streams into a single .xdf file).

- To get additional information on fixations and saccades, record data in parallel on the Pupil Labs phone for offline post-processing.
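After recording, the .xdf file can be inspected in Python. The sketch below assumes the open-source `pyxdf` package is installed and uses its `load_xdf` function. The file name and the stream-name fragments ("ExG", "Gaze") are placeholders, not confirmed names, so check the stream names in your own recording before relying on them.

```python
def load_streams(xdf_path):
    """Load all streams from an .xdf file (requires `pip install pyxdf`)."""
    import pyxdf  # imported lazily so the helpers below work without it

    streams, _header = pyxdf.load_xdf(xdf_path)
    return streams


def find_stream(streams, name_fragment):
    """Return the first stream whose LSL name contains name_fragment."""
    for stream in streams:
        name = stream["info"]["name"][0]
        if name_fragment.lower() in name.lower():
            return stream
    raise LookupError(f"no stream with {name_fragment!r} in its name")


def overlap_window(stream_a, stream_b):
    """Return (start, end) of the time span covered by both streams."""
    start = max(stream_a["time_stamps"][0], stream_b["time_stamps"][0])
    end = min(stream_a["time_stamps"][-1], stream_b["time_stamps"][-1])
    if start > end:
        raise ValueError("streams do not overlap in time")
    return start, end


# Example usage (hypothetical file and stream names):
# streams = load_streams("maze_session.xdf")
# eeg = find_stream(streams, "ExG")
# gaze = find_stream(streams, "Gaze")
# print(overlap_window(eeg, gaze))
```

Restricting analysis to the overlapping window is a simple way to discard samples recorded before both devices were streaming.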

Learn more about the setup: https://wiki.mentalab.com/integrations/hardware/pupillabs/

Download Explore Desktop: https://github.com/Mentalab-hub/explore-desktop-release/releases/tag/v1.5.1

Download Neon Player: https://github.com/pupil-labs/neon-player

Expanded Research Use Cases

The potential applications for synchronized EEG and eye tracking span numerous fields and offer deep insights into human cognition and behavior. In neuromarketing, a multimodal ensemble learning framework has predicted “buy vs. no buy” choices with accuracies as high as 84.01% [3]. Related evidence shows that fixations leading to clicks are accompanied by significantly larger pupil sizes than fixations that do not, providing a physiological marker of consumer intent [2].

Beyond consumer behavior, this integration is crucial for monitoring cognitive load in high-stakes environments. By combining neural markers with visual scanning patterns, systems can detect when a user is overwhelmed even if their external performance appears stable [4]. These insights enable the development of “attention-aware” XR and VR environments that automatically adjust difficulty or content based on a user’s real-time mental effort. For example, simulations can dynamically simplify user interfaces or reduce visual clutter during periods of high cognitive load to maintain safety and efficiency [4].

Interested in this line of research? Our mobile and easy-to-use devices make conducting complex multimodal studies simpler than ever, allowing you to take your research out of the lab and into the real world.

Selected References

1. Larsen, O. F. P., Tresselt, W. G., Lorenz, E. A., Holt, T., Sandstrak, G., Hansen, T. I., Su, X., & Holt, A. (2024). A method for synchronized use of EEG and eye tracking in fully immersive VR. Frontiers in Human Neuroscience, 18, 1347974.

2. Slanzi, G., Balazs, J. A., & Velásquez, J. D. (2017). Combining eye tracking, pupil dilation and EEG analysis for predicting web users click intention. Information Fusion, 35, 51-57.

3. Usman, S. M., Khalid, S., Tanveer, A., Imran, A. S., & Zubair, M. (2025). Multimodal consumer choice prediction using EEG signals and eye tracking. Frontiers in Computational Neuroscience, 18, 1516440.

4. Vortmann, L. M., Ceh, S., & Putze, F. (2022). Multimodal EEG and eye tracking feature fusion approaches for attention classification in hybrid BCIs. Frontiers in Computer Science, 4, 780580.